- 20-03-2026

- Artificial Intelligence

Researchers have developed a new method that enables AI models to explain their predictions using human-understandable concepts. This approach improves transparency in computer vision systems, helping scientists and engineers better understand how AI reaches its decisions.

Artificial intelligence models, particularly those used in computer vision, are often highly accurate but difficult to interpret. In many critical applications such as healthcare, autonomous driving, and safety monitoring, understanding the reasoning behind an AI decision is essential for building trust and ensuring safe deployment.

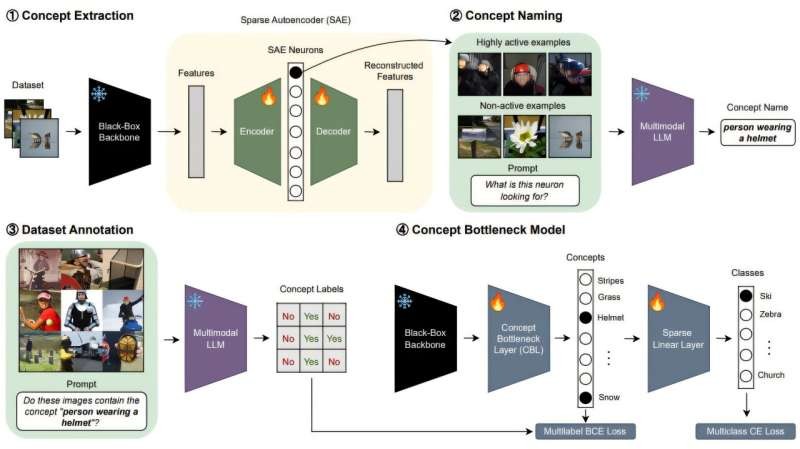

One approach designed to improve transparency is known as concept bottleneck modeling. In this method, the model first predicts a set of intermediate concepts that humans can interpret, such as specific patterns or recognizable visual features in an image, and then makes its final prediction from those concepts alone.
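To make the bottleneck idea concrete, here is a minimal sketch in Python with NumPy. The concept names ("has_wings", "has_beak", "striped_pattern"), the two classes and the hand-set weights are hypothetical stand-ins for a trained model. The point is only the structure: the label head sees nothing but the concept scores, so each prediction can be broken down into per-concept contributions. It is an illustration of the general technique, not the researchers' model.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class ConceptBottleneckModel:
    """Toy concept bottleneck: features -> named concept scores -> class label.

    Weights here are hypothetical and hand-set; a real model would learn them.
    """

    def __init__(self, concept_names, concept_weights, label_weights):
        self.concept_names = list(concept_names)
        self.concept_weights = np.asarray(concept_weights, dtype=float)  # (n_features, n_concepts)
        self.label_weights = np.asarray(label_weights, dtype=float)      # (n_concepts, n_classes)

    def concepts(self, features):
        # One score in (0, 1) per named concept.
        return sigmoid(features @ self.concept_weights)

    def predict(self, features):
        # The label is computed from the concept scores only (the "bottleneck").
        c = self.concepts(features)
        logits = c @ self.label_weights
        return logits.argmax(axis=-1), logits

    def explain(self, features, class_idx):
        # For a single sample (1-D feature vector): each concept's contribution
        # to one class logit. The contributions sum to that logit.
        c = self.concepts(features)
        return dict(zip(self.concept_names, c * self.label_weights[:, class_idx]))


# Hypothetical setup: class 0 = "bird", class 1 = "zebra".
model = ConceptBottleneckModel(
    ["has_wings", "has_beak", "striped_pattern"],
    concept_weights=[[4.0, 4.0, -4.0], [-4.0, -4.0, 4.0]],
    label_weights=[[2.0, -2.0], [2.0, -2.0], [-2.0, 2.0]],
)
x = np.array([[1.0, 0.0], [0.0, 1.0]])
labels, logits = model.predict(x)
print(labels)  # [0 1]
print(model.explain(x[0], class_idx=0))
```

Because the final layer is linear in the concept scores, an explanation is simply "concept score times weight"; richer heads are possible but make this decomposition less direct.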

However, these concepts are typically predefined by human experts. If the chosen concepts do not capture all of the information the model relies on, both the system's performance and the accuracy of its explanations can suffer.

To overcome this limitation, researchers developed a new technique that automatically extracts the concepts the AI model has already learned during training. The system analyzes the internal structure of a trained model and converts its learned representations into human-understandable explanations.
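The article does not describe how the concepts are extracted. One common family of techniques factorizes a trained network's internal activations into a small set of recurring directions, then shows a person the images that activate each direction most strongly so it can be named. The sketch below uses non-negative matrix factorization (NMF) with Lee-Seung multiplicative updates as a stand-in for that step, applied to synthetic "activations"; the choice of NMF and the function names `extract_concepts` and `top_examples` are illustrative assumptions, not the researchers' method. Note that the network's weights are only read, never updated.

```python
import numpy as np


def extract_concepts(activations, n_concepts, n_iter=500, eps=1e-9, seed=0):
    """Factor non-negative activations A (n_samples, n_features) as A ≈ U @ W.

    Stand-in technique (assumption): NMF via Lee-Seung multiplicative updates.
    U (n_samples, n_concepts): how strongly each sample expresses each concept.
    W (n_concepts, n_features): each concept as a direction in feature space.
    """
    A = np.asarray(activations, dtype=float)
    if (A < 0).any():
        raise ValueError("NMF needs non-negative activations (e.g. taken after a ReLU)")
    rng = np.random.default_rng(seed)
    U = rng.random((A.shape[0], n_concepts)) + eps
    W = rng.random((n_concepts, A.shape[1])) + eps
    for _ in range(n_iter):
        # Updates keep both factors non-negative and (ignoring eps)
        # never increase the Frobenius error ||A - U @ W||.
        W *= (U.T @ A) / (U.T @ U @ W + eps)
        U *= (A @ W.T) / (U @ W @ W.T + eps)
    return U, W


def top_examples(U, concept_idx, k=3):
    # Samples that express a concept most strongly; a person would inspect
    # these images or patches to give the concept a human-readable name.
    return np.argsort(U[:, concept_idx])[::-1][:k]


# Synthetic stand-in for activations from a frozen backbone: two hidden concepts.
rng = np.random.default_rng(1)
true_W = np.array([[1.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]])
acts = rng.random((20, 2)) @ true_W
U, W = extract_concepts(acts, n_concepts=2)
rel_err = np.linalg.norm(acts - U @ W) / np.linalg.norm(acts)
print(round(float(rel_err), 3))
print(top_examples(U, concept_idx=0))
```

NMF is used here because non-negative factors tend to read as "parts" that are present or absent, which suits post-ReLU features; other decompositions (for example sparse dictionary learning or clustering) are also used for this purpose.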

This approach allows existing computer vision systems to become more interpretable without requiring them to be retrained from scratch. By revealing how predictions are generated, it helps researchers verify whether AI systems rely on meaningful patterns rather than unintended correlations.

As artificial intelligence continues to expand into safety-critical environments, improving explainability will play an important role in ensuring transparency, accountability, and trust in AI technologies.