- 10-04-2026

- Artificial Intelligence

A new research method enables accurate prediction of how LLMs will perform on tasks they haven’t encountered before. By analyzing models across multiple dimensions, it offers a more comprehensive way to evaluate AI capabilities.

A recent study introduces an innovative framework for evaluating large language models (LLMs), shifting the focus from retrospective benchmarking to forward-looking performance prediction. Traditionally, AI systems are tested on predefined datasets, but this approach often fails to capture how models behave in unfamiliar or real-world scenarios.

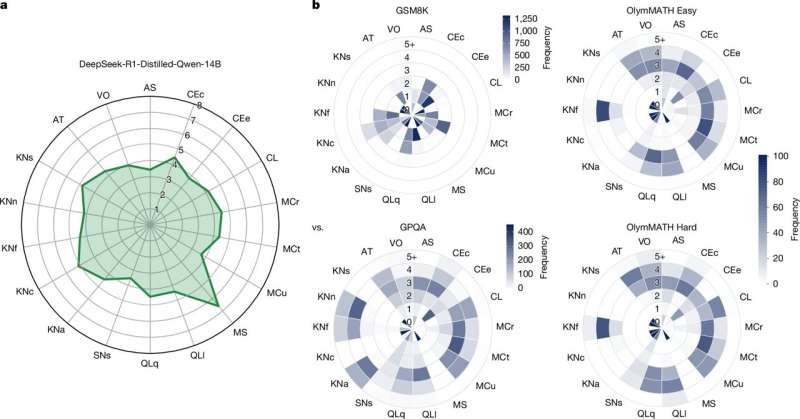

The proposed method, known as ADeLe, addresses this limitation by assessing AI systems across 18 distinct cognitive dimensions, including reasoning, memory, and task complexity. This multidimensional analysis creates a comprehensive capability profile for each model, allowing researchers to predict its performance on entirely new tasks with an accuracy of around 90%.
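To make the idea of a capability profile concrete, here is a minimal sketch of how a model's per-dimension abilities could be compared with a task's per-dimension demands to estimate success. It is not the ADeLe implementation: the three dimension names, the 0 to 5 scale, the "weakest dimension is the bottleneck" rule, the logistic curve, and the `predict_success` function are all assumptions made up for this illustration.

```python
import math

# Hypothetical dimension names and a 0-5 scale, chosen for illustration only.
DIMENSIONS = ("reasoning", "memory", "task_complexity")


def predict_success(ability, demand, slope=1.5):
    """Toy estimate of the chance that a model succeeds on a task.

    Assumes the weakest dimension is the bottleneck: take the smallest
    ability-minus-demand margin and pass it through a logistic curve.
    """
    margin = min(ability[d] - demand[d] for d in DIMENSIONS)
    return 1.0 / (1.0 + math.exp(-slope * margin))


# Made-up ability profile for one model and demand profiles for two unseen tasks.
model_profile = {"reasoning": 4.0, "memory": 3.0, "task_complexity": 3.5}
easy_task = {"reasoning": 2.0, "memory": 1.0, "task_complexity": 2.0}
hard_task = {"reasoning": 5.0, "memory": 2.0, "task_complexity": 4.0}

for name, task in (("easy", easy_task), ("hard", hard_task)):
    print(f"{name}: {predict_success(model_profile, task):.2f}")
```

In this toy setup, a task whose demands sit below the model's abilities on every dimension scores above 0.5, while a task that exceeds the model on even one dimension is pulled below 0.5. A real predictor would be fitted to observed results rather than hand-specified like this.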

A key finding of the research is that many existing benchmarks do not measure the capabilities they are intended to measure. Instead, they may inadvertently test unrelated skills, which leads to misleading conclusions about what a model can actually do. By contrast, ADeLe provides both predictive and explanatory power, helping identify strengths and weaknesses before deployment.

This advance matters for industries that rely on AI, because knowing in advance where a model is likely to fail reduces uncertainty and risk. Organizations can make more informed decisions when selecting or deploying models, ensuring better alignment with specific use cases.

Overall, this work represents a major step toward building more reliable, interpretable, and robust AI systems. It reinforces the idea that understanding AI behavior in advance is just as important as improving its performance.