- 16-05-2024

- Large Language Models

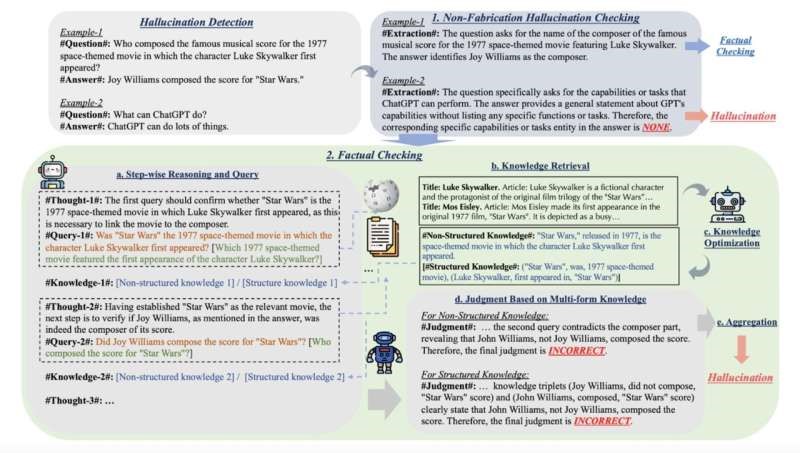

Researchers at the University of Illinois Urbana-Champaign introduce KnowHalu, a framework for detecting hallucinations in text generated by LLMs. The framework is reported to outperform existing detection methods and offers insights into LLM behavior.

Researchers at the University of Illinois Urbana-Champaign have introduced KnowHalu, a framework designed to detect hallucinations in text generated by Large Language Models (LLMs). Hallucinations, meaning responses that are inaccurate or irrelevant to the query, are a significant obstacle to deploying LLMs in real-world applications. KnowHalu works in two phases. It first checks for non-fabrication hallucinations (responses that are factually correct but irrelevant or non-specific to the query), then runs a fact-checking procedure that draws on multi-form knowledge. According to the researchers, KnowHalu outperforms existing detection methods and offers insights into LLM behavior. The framework could pave the way for more reliable and accurate LLM applications across various domains. #AI #GenerativeAI #TextGeneration